Many deep learning-based methods, such as SGPN and ASIS, have been developed for 3D instance segmentation, but it is still interesting to see how traditional methods such as 3D shape contexts perform on this task. The shape context is a regional point descriptor that measures the similarity between shapes by computing point-wise correspondences between a query shape and a reference shape; the 3D shape context extends it to 3D shapes.
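To make the descriptor concrete, here is a minimal sketch of a 3D shape context histogram computed around one point with NumPy. It is an illustration under simplifying assumptions, not the code used in this experiment: the function name `shape_context_3d`, the bin counts, and the radii are all hypothetical choices, the histogram is built in the fixed world frame, and refinements such as aligning the bins to the local surface normal or weighting the counts by bin volume are left out.

```python
import numpy as np

def shape_context_3d(points, center, r_min=0.1, r_max=2.0, n_r=5, n_az=12, n_el=6):
    """Simplified 3D shape context: a spherical histogram of the
    neighbours of `center`. All parameter defaults are illustrative."""
    offsets = points - center
    r = np.linalg.norm(offsets, axis=1)

    # Drop the centre point itself and everything outside the support sphere.
    keep = (r > 0) & (r <= r_max)
    offsets, r = offsets[keep], r[keep]

    # Log-spaced radial shells; points closer than r_min fall in the first shell.
    r_edges = np.logspace(np.log10(r_min), np.log10(r_max), n_r + 1)
    r_bin = np.clip(np.searchsorted(r_edges, r, side="right") - 1, 0, n_r - 1)

    # Azimuth in [-pi, pi] and polar angle in [0, pi], each split uniformly.
    az = np.arctan2(offsets[:, 1], offsets[:, 0])
    az_bin = np.minimum(((az + np.pi) / (2 * np.pi) * n_az).astype(int), n_az - 1)
    el = np.arccos(np.clip(offsets[:, 2] / r, -1.0, 1.0))
    el_bin = np.minimum((el / np.pi * n_el).astype(int), n_el - 1)

    # Count the neighbours that land in each (radius, azimuth, elevation) bin.
    hist = np.zeros((n_r, n_az, n_el))
    np.add.at(hist, (r_bin, az_bin, el_bin), 1.0)
    return hist
```

Two shapes can then be compared by computing such histograms at sampled points and measuring a distance between them. The log-spaced shells make the descriptor more sensitive to nearby structure than to distant points.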

The basic idea is to iteratively find and remove the best-matching shape for each query, retrieving the individual instance shapes one at a time. This gives a simple form of instance segmentation. For example, given a room as input, this method can retrieve all instances of straight-leg chairs, as shown in Figure 1.

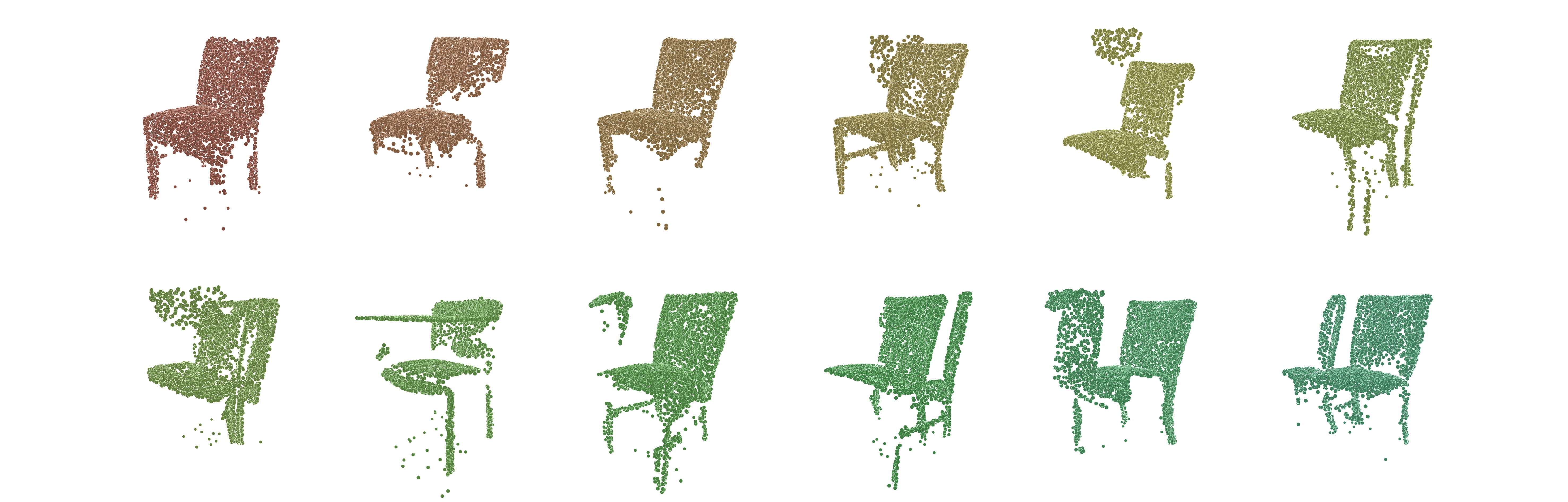
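The find-and-remove loop described above can be sketched as follows. This is only a rough illustration and makes several assumptions that the write-up does not state: the scene is assumed to be already split into candidate point sets, `describe` is a hypothetical callable that turns a point set into a histogram descriptor, the chi-squared distance is a stand-in for whatever matching cost the experiment used, and `max_cost` is an arbitrary stopping threshold.

```python
import numpy as np

def chi2_cost(h1, h2, eps=1e-12):
    """Chi-squared distance between two histograms, each normalised to sum to 1."""
    p = h1.ravel() / (h1.sum() + eps)
    q = h2.ravel() / (h2.sum() + eps)
    return 0.5 * np.sum((p - q) ** 2 / (p + q + eps))

def retrieve_instances(candidates, query_hist, describe, max_cost):
    """Greedily pull out the candidates that best match the query.

    candidates : list of (N_i, 3) point arrays (assumed pre-segmented regions)
    query_hist : descriptor of the query shape
    describe   : hypothetical function mapping a point array to a histogram
    max_cost   : stop once the best remaining match is worse than this
    """
    remaining = list(candidates)
    found = []
    while remaining:
        # Score every remaining candidate against the query.
        costs = [chi2_cost(describe(c), query_hist) for c in remaining]
        best = int(np.argmin(costs))
        if costs[best] > max_cost:
            break  # nothing left resembles the query closely enough
        # Record the best match and remove it from the scene.
        found.append(remaining.pop(best))
    return found
```

Removing each match before searching again keeps the same region from being returned twice, so each retrieved point set corresponds to one instance.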

Figure 2 gives a closer look at the retrieved chair instances.

More details about this experiment can be found in this report.